The Terminal (CLI) vs. The Protocol (MCP): 5 Counter-Intuitive Truths About the Future of AI Tooling

The question for every CTO in 2026 is simple: Are you building for a developer at a terminal, or for an autonomous system?

1. Introduction: The “Vibe-Coding” Crossroads

The software industry is currently navigating a sharp case of strategic whiplash. In late 2024, the Model Context Protocol (MCP) was hailed as the “universal connector” for the agentic era. By March 2026, the pendulum has swung violently toward a “CLI is all you need” backlash. This “March madness” reached a fever pitch when Peter Steinberger (OpenClaw) declared MCP a mistake, arguing that the humble terminal, a 50-year-old interface, is the only one an agent requires.

This tension is often framed as a battle between “vibe-coding” (solo developers using loose, terminal-driven workflows) and “agentic engineering” (organizations building scalable, reliable systems). While defenders argue that we are simply in the “messy middle” of a paradigm shift, the narrative that “MCP is dead” ignores the underlying architectural requirements of enterprise AI. We aren’t choosing between a terminal and a protocol; we are choosing between the models we have today and the autonomous systems we are building for tomorrow.

2. Takeaway 1: The Invisible Tax of “Zero-Token” CLIs

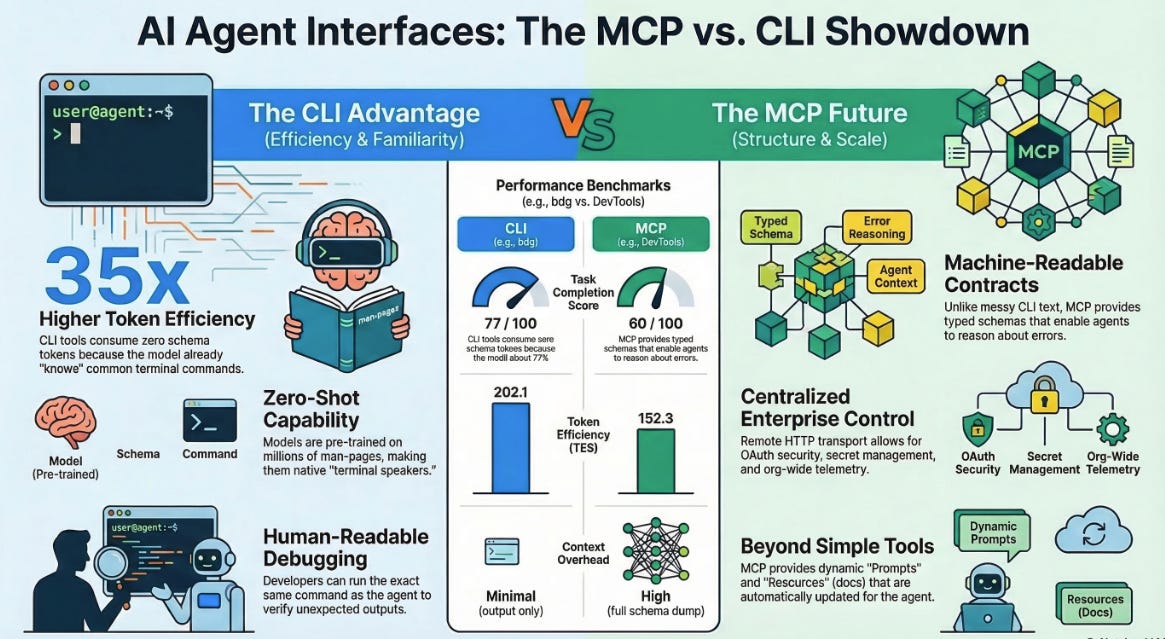

A primary argument for the command-line interface (CLI) is token efficiency. When an agent uses a well-known tool like gh or kubectl, it theoretically requires zero tokens for schema injection, because the tool’s usage is already encoded in the model’s weights: during training, the model has seen vast numbers of documents that use these commands. Benchmarks from late 2025 support this, showing a 35x reduction in token usage for specific tasks, such as listing Microsoft Intune devices, when using a CLI instead of a bloated MCP server.

The gap can be even wider on data-heavy tasks. Consider the “Amazon challenge” from recent browser automation benchmarks: parsing a full product page via an MCP snapshot costs roughly 52,000 tokens, whereas selective CLI queries cost only about 1,200. That is a 43x efficiency gap in favor of the CLI.

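But this efficiency comes with a hidden cost, Progressive Context Consumption, which can turn the “zero-token” benefit into a mirage for bespoke tools. Without a structured schema, an agent facing an unfamiliar tool must enter a “Help Loop,” recursively calling --help to discover each level of subcommands and flags. This leads to: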

Context Fragmentation: As the agent descends the CLI tree, it fills its window with fragmented, untyped documentation.

The Error Spiral: Without a machine-readable contract, the probability of the agent misinterpreting output or using a tool incorrectly increases. As the discourse suggests, “Without giving the agent the full schema up front, the chance of the agent using the toolset correctly will go down.”
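To make the Help Loop concrete, here is a toy simulation in Python. The CLI name `acme`, its help pages, and the four-characters-per-token estimate are all hypothetical; the point is only that discovery costs one call per level of the command tree, and every help page stays in the context window afterwards.

```python
# Hypothetical help pages for an imaginary internal CLI named "acme".
# Keys are command paths; values are what `<path> --help` would print.
HELP_PAGES = {
    ("acme",): "Usage: acme <group> ...\nGroups: deploy, billing",
    ("acme", "deploy"): "Usage: acme deploy <command> ...\nCommands: start, rollback",
    ("acme", "deploy", "rollback"): "Usage: acme deploy rollback --release ID [--dry-run]",
}


def rough_tokens(text: str) -> int:
    """Crude estimate of ~4 characters per token (an assumption, not a real tokenizer)."""
    return max(1, len(text) // 4)


def help_loop(target: tuple[str, ...]) -> list[str]:
    """Simulate an agent discovering `target` one level at a time.

    Each level costs one `--help` call, and each help page remains in the
    context window for the rest of the session.
    """
    context: list[str] = []
    for depth in range(1, len(target) + 1):
        page = HELP_PAGES[target[:depth]]  # one tool call per level of the tree
        context.append(page)
    return context


context = help_loop(("acme", "deploy", "rollback"))
print(len(context))  # 3 help calls before the agent can run a single command
print(sum(rough_tokens(page) for page in context), "tokens spent on discovery")
```

With a typed schema delivered up front, the agent would skip the three round-trips and receive the `--release` and `--dry-run` parameters in one structured block instead of three loosely formatted pages.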

3. Takeaway 2: The Terminal as a “50-Year-Old Accident”

The current dominance of CLIs in AI workflows isn’t a result of superior design, but a massive training data advantage. LLMs are “native speakers” of the terminal because the internet is saturated with decades of man pages, Stack Overflow answers, and shell scripts.

As Mike Miller (Courier) points out, the real distinction is where the knowledge lives. LLMs carry prior knowledge of the CLI in their weights, while MCP relies entirely on runtime context, since most models’ training data contains little or no MCP usage.

For enterprises, this means we are currently “paying for something the model has no prior understanding of.” The terminal became the best interface for agents “accidentally”: it had been documented for humans for half a century before agents existed. But relying on “prior knowledge” is a short-term hedge. As we move toward bespoke internal services that the model could never have seen in training, the CLI’s “accidental” advantage evaporates, leaving us with a fragmented mess of untyped binaries.

4. Takeaway 3: It’s Not a Tool, It’s a Contract (The “REST in 1999” Analogy)

Current critiques of MCP often focus on “rough” implementations—zombie Node processes and silent initialization failures. These are symptoms of an infant protocol, not a flawed one. This is REST in 1999; we are in the messy period before OpenAPI provided the standard contract that made web APIs predictable.
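Zombie processes are a lifecycle bug rather than a protocol flaw: with stdio transport the host launches each server as a child process, so the host is responsible for reaping it. Here is a minimal Python sketch of that responsibility, assuming a host that spawns servers via `subprocess`. The `stdio_server` helper is hypothetical, not part of any MCP SDK; its close-stdin, then terminate, then kill sequence is, as far as we can tell, the order the MCP lifecycle documentation suggests for stdio shutdown. It cannot help if the host itself is killed outright, which is part of the case for remote servers made in Takeaway 4.

```python
import subprocess
import sys
from contextlib import contextmanager


@contextmanager
def stdio_server(cmd: list[str], grace_seconds: float = 2.0):
    """Spawn a hypothetical stdio server and always reap it when the session ends."""
    proc = subprocess.Popen(cmd, stdin=subprocess.PIPE, stdout=subprocess.PIPE)
    try:
        yield proc  # the host would exchange JSON-RPC messages over proc.stdin / proc.stdout
    finally:
        proc.stdin.close()  # step 1: EOF on stdin; a well-behaved server exits by itself
        try:
            proc.wait(timeout=grace_seconds)
        except subprocess.TimeoutExpired:
            proc.terminate()  # step 2: SIGTERM on POSIX
            try:
                proc.wait(timeout=grace_seconds)
            except subprocess.TimeoutExpired:
                proc.kill()  # step 3: SIGKILL on POSIX
                proc.wait()
        proc.stdout.close()


# Stand-in server: a Python child that exits as soon as its stdin reaches EOF.
with stdio_server([sys.executable, "-c", "import sys; sys.stdin.read()"]) as server:
    print("server pid:", server.pid)
print("exit code:", server.returncode)  # 0: no orphan left behind
```

A host that skips the `finally` block, or exits without waiting on its children, is exactly how a long session accumulates orphaned Node processes.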

The value of MCP lies in the capabilities that CLIs fundamentally cannot replicate:

Elicitation: Unlike a CLI, which struggles with interactive prompts, MCP allows a tool to ask the user for more information mid-execution.

Sampling: This allows a tool to “call back” to the host LLM to perform inference tasks, effectively giving the tool its own “brain” for sub-tasks.

Typed Contracts: While a human can debug a CLI’s inconsistent text output, an agent needs a machine-readable schema to verify its actions. CLIs provide “whatever the maintainer felt like that day,” whereas MCP provides a contract that guards against hallucinated arguments and silent failures.
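The “typed contract” point is easiest to see in code. MCP servers advertise each tool through `tools/list` with a `name`, a `description`, and a JSON Schema under `inputSchema`. The Python sketch below uses a hypothetical `rollback_release` tool and a hand-rolled validator that covers only `required`, three primitive types, and `additionalProperties: false`; a production system would use a full JSON Schema validator instead.

```python
# Tool definition in the shape MCP servers advertise via tools/list.
# The tool itself ("rollback_release") is hypothetical.
ROLLBACK_TOOL = {
    "name": "rollback_release",
    "description": "Roll a service back to a previous release.",
    "inputSchema": {
        "type": "object",
        "properties": {
            "service": {"type": "string"},
            "release_id": {"type": "integer"},
            "dry_run": {"type": "boolean"},
        },
        "required": ["service", "release_id"],
        "additionalProperties": False,
    },
}

# Small mapping from JSON Schema type names to Python types.
_TYPES = {"string": str, "integer": int, "boolean": bool}


def validate_arguments(tool: dict, args: dict) -> list[str]:
    """Check args against a small subset of JSON Schema; return a list of errors."""
    schema = tool["inputSchema"]
    errors = []
    for field in schema.get("required", []):
        if field not in args:
            errors.append(f"missing required field: {field}")
    for field, value in args.items():
        spec = schema["properties"].get(field)
        if spec is None:
            if schema.get("additionalProperties", True) is False:
                errors.append(f"unknown field: {field}")
            continue
        expected = _TYPES[spec["type"]]
        # bool is a subclass of int in Python, so reject True/False for integers.
        if expected is int and isinstance(value, bool):
            ok = False
        else:
            ok = isinstance(value, expected)
        if not ok:
            errors.append(f"{field}: expected {spec['type']}, got {type(value).__name__}")
    return errors


print(validate_arguments(ROLLBACK_TOOL, {"service": "checkout", "release_id": 41}))
# []
print(validate_arguments(ROLLBACK_TOOL, {"service": "checkout", "release_id": "v41"}))
# ['release_id: expected integer, got str']
```

The second call is the kind of mistake a free-text CLI might accept or reject with an ambiguous message; against a schema it fails before execution with an error the agent can act on.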

5. Takeaway 4: The Enterprise Pivot—From Stdio to Streamable HTTP

The “Zombie Process” problem—where users find 100+ orphaned Node processes after a session—is an artifact of “Local MCP” running over stdio. The strategic pivot for organizations is the move to Remote MCP over Streamable HTTP.

This shift turns MCP from a local daemon into enterprise infrastructure.

Operationalization: Instead of managing local binaries on every developer’s machine, tools are hosted and maintained centrally.

The Auth Win: CLIs rely on local AWS or GitHub credential profiles, a nightmare for security teams. Remote MCP can use OAuth 2.0 client credentials instead, enabling token revocation, scoped permissions (such as read-only access), and centralized telemetry.

Observability: With a centralized server, teams can see exactly which tools are failing and which are delivering value, something nearly impossible to audit across a fleet of local CLI users.
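To make the auth and transport points concrete, here is a minimal Python sketch that only builds the two HTTP requests involved, using the standard library. It assumes an OAuth 2.0 client credentials grant (RFC 6749, section 4.4) with a hypothetical `tools:read` scope, followed by a JSON-RPC `tools/call` message posted to a Streamable HTTP endpoint. The URLs, scope, and tool name are placeholders, and a real client would also perform the MCP `initialize` handshake, track any session ID the server returns, and discover the authorization server rather than hard-coding it.

```python
import base64
import json
import urllib.parse
import urllib.request

# Placeholder endpoints for a hypothetical internal deployment.
TOKEN_URL = "https://auth.example.internal/oauth2/token"
MCP_URL = "https://mcp.example.internal/mcp"


def token_request(client_id: str, client_secret: str, scope: str) -> urllib.request.Request:
    """Build an OAuth 2.0 client credentials token request (RFC 6749, section 4.4)."""
    body = urllib.parse.urlencode({"grant_type": "client_credentials", "scope": scope}).encode()
    # HTTP Basic client authentication; RFC 6749 form-encodes id and secret first.
    creds = f"{urllib.parse.quote_plus(client_id)}:{urllib.parse.quote_plus(client_secret)}"
    basic = base64.b64encode(creds.encode()).decode()
    return urllib.request.Request(
        TOKEN_URL,
        data=body,
        headers={
            "Authorization": f"Basic {basic}",
            "Content-Type": "application/x-www-form-urlencoded",
        },
        method="POST",
    )


def tools_call_request(access_token: str, tool: str, arguments: dict,
                       request_id: int = 1) -> urllib.request.Request:
    """Build a JSON-RPC 2.0 tools/call POST for a Streamable HTTP endpoint."""
    payload = {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool, "arguments": arguments},
    }
    return urllib.request.Request(
        MCP_URL,
        data=json.dumps(payload).encode(),
        headers={
            "Authorization": f"Bearer {access_token}",  # scoped, revocable token
            "Content-Type": "application/json",
            # The server may answer with a single JSON body or an SSE stream.
            "Accept": "application/json, text/event-stream",
        },
        method="POST",
    )


token_req = token_request("ci-agent", "not-a-real-secret", "tools:read")
call_req = tools_call_request("ACCESS_TOKEN_PLACEHOLDER", "list_devices", {"limit": 10})
print(token_req.get_method(), token_req.full_url)
print(call_req.get_header("Accept"))
# urllib.request.urlopen(...) would send these; skipped because the hosts are placeholders.
```

Because every agent presents its own scoped token to one endpoint, the server can log and attribute each `tools/call`, which is where the observability benefit comes from.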

6. Takeaway 5: Moving Beyond “Cowboy Coding” to Agentic Engineering

The CLI vs. MCP debate is actually a conflict between “solo vibe-coders” and “engineering organizations.” Organizations require Agentic Engineering: a discipline that ensures consistency and quality even when the producer of the software is an agent.

Key to this are MCP Prompts and Resources, which function as server-delivered, dynamic “SKILL.md” files. Charles Chen highlights the power of “Virtual Indices”—dynamically generated documentation that is always up-to-date.

Versus Static Docs: Repo-based AGENTS.md files require manual syncing and create versioning nightmares.

Dynamic Context: Server-delivered prompts can inject real-time system status or pricing data without a separate tool call.
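A “virtual index” can be as simple as a resource whose text is rendered on every read. MCP servers answer `resources/read` with a `contents` list whose items carry `uri`, `mimeType`, and `text`. The Python sketch below assumes a hypothetical `docs://index` URI and a stubbed `load_registry()` standing in for a live service catalog; it is an illustration, not the approach of any particular server.

```python
from datetime import datetime, timezone


def load_registry() -> list[dict]:
    """Hypothetical stand-in for a live source (service catalog, status page, pricing table)."""
    return [
        {"name": "rollback_release", "status": "healthy", "owner": "platform"},
        {"name": "list_devices", "status": "degraded", "owner": "endpoint-mgmt"},
    ]


def render_virtual_index(registry: list[dict]) -> str:
    """Generate SKILL.md-style guidance at read time instead of committing it to a repo."""
    stamp = datetime.now(timezone.utc).strftime("%Y-%m-%dT%H:%M:%SZ")
    lines = [f"# Tool index (generated {stamp})", ""]
    for tool in sorted(registry, key=lambda t: t["name"]):
        lines.append(f"- `{tool['name']}` ({tool['status']}), owned by {tool['owner']}")
    return "\n".join(lines)


def handle_resources_read(request: dict) -> dict:
    """Answer a JSON-RPC resources/read request for the hypothetical docs://index URI."""
    uri = request["params"]["uri"]
    if uri != "docs://index":
        # Standard JSON-RPC "Invalid params" code; the MCP spec may define a more specific one.
        return {"jsonrpc": "2.0", "id": request["id"],
                "error": {"code": -32602, "message": f"Resource not found: {uri}"}}
    return {
        "jsonrpc": "2.0",
        "id": request["id"],
        "result": {"contents": [{
            "uri": uri,
            "mimeType": "text/markdown",
            "text": render_virtual_index(load_registry()),
        }]},
    }


resp = handle_resources_read(
    {"jsonrpc": "2.0", "id": 7, "method": "resources/read", "params": {"uri": "docs://index"}}
)
print(resp["result"]["contents"][0]["text"])
```

Because the index is generated at read time, there is no committed file to fall out of sync: a degraded tool shows up as degraded the next time an agent reads the resource.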

As recent challenges at Amazon showed, with AI-assisted coding affecting real production systems, we can no longer afford “cowboy coding” in the agentic era. We need a standardized way to operationalize knowledge.

Conclusion: The Hybrid Horizon

The future is not a zero-sum game. The effective AI infrastructure strategy is hybrid:

Use CLIs for well-known developer tools (Git, Docker), where prior knowledge from training data yields high accuracy and little context has to be paid for at runtime.

Adopt MCP for bespoke internal services, enterprise integrations, and any scenario requiring centralized security, telemetry, and typed consistency.
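The hybrid rule can be written down as a tiny routing policy. The sketch below is illustrative only: the allow-list of well-known CLIs, the `Integration` flags, and the choice to default unfamiliar tools to MCP are assumptions layered on top of the two guidelines above.

```python
from dataclasses import dataclass

# Hypothetical allow-list of tools models already know well from training data.
WELL_KNOWN_CLIS = {"git", "docker", "gh", "kubectl"}


@dataclass(frozen=True)
class Integration:
    name: str
    bespoke: bool = False             # internal service the model never saw in training
    needs_central_auth: bool = False  # scoped, revocable credentials
    needs_telemetry: bool = False     # centralized audit of tool usage


def choose_interface(integration: Integration) -> str:
    """Return "cli" or "mcp" following the hybrid rule of thumb."""
    if integration.bespoke or integration.needs_central_auth or integration.needs_telemetry:
        return "mcp"
    if integration.name in WELL_KNOWN_CLIS:
        return "cli"
    # Assumption: unfamiliar public tools also go through MCP so the agent gets a schema up front.
    return "mcp"


print(choose_interface(Integration("git")))                        # cli
print(choose_interface(Integration("billing-api", bespoke=True)))  # mcp
```

Note that the enterprise requirements are checked first, so even a familiar tool like Docker is routed through MCP once the organization needs central auth or telemetry for it.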

The question for every CTO in 2026 is simple: Are you building for a developer at a terminal, or for an autonomous system that requires a machine-readable constitution? The terminal is the interface of our history; the protocol is the interface of our future.